Differences between AI and Generative AI

Artificial intelligence vs. generative AI

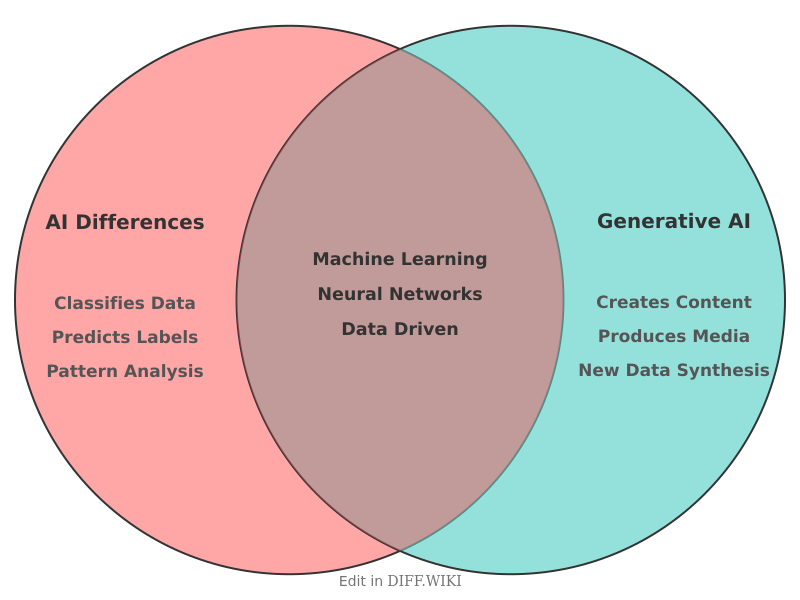

Artificial intelligence (AI) is a field of computer science that develops systems to perform tasks requiring human-like cognitive functions, such as reasoning, learning, and problem-solving. The term is used broadly and covers several subfields, including machine learning and deep learning. Generative AI is a subset of artificial intelligence designed to create new content, such as text, images, or audio, by learning the underlying patterns of existing data.

The primary distinction between traditional AI and generative AI lies in their output. Traditional AI systems are often "discriminative," meaning they classify data or make predictions based on recognized patterns. For example, a spam filter identifies whether an email is unsolicited, and a medical AI might flag anomalies in an X-ray. In contrast, generative AI uses its training to produce novel outputs that did not exist in the original dataset.

Comparison of AI types

| Feature | Traditional AI | Generative AI |
|---|---|---|
| Primary Goal | To analyze, classify, or predict. | To create new, original content. |
| Output | Labels, scores, or decisions. | Text, images, code, or media. |
| Methodology | Discriminative models. | Generative models (e.g., Transformers, GANs). |
| Interaction | Often automated and background-process oriented. | Frequently conversational or prompt-based. |
| Data Usage | Identifies boundaries between data points. | Learns the distribution of data to replicate it. |
| Examples | Fraud detection, chess engines, recommendation systems. | ChatGPT, Midjourney, GitHub Copilot. |

Discriminative vs. generative models

Most traditional AI applications rely on discriminative models. These models estimate the conditional probability of a label given the input data, often written P(y | x). A facial recognition system, for instance, compares an input image against a database to determine whether there is a match. These systems are evaluated on how accurately they identify or categorize known entities.
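
As a minimal sketch of a discriminative model, the spam filter mentioned earlier can be written as a logistic-regression-style scorer that returns the probability of the label "spam" given the words in an email. The weights, bias, and function names below are hypothetical and hand-picked for illustration; a real filter would learn its weights from labeled data.

```python
import math

# Hypothetical hand-picked weights; a real model would learn these from labeled emails.
WEIGHTS = {"free": 1.8, "winner": 2.2, "meeting": -1.5, "invoice": -0.4}
BIAS = -1.0


def spam_probability(email_text: str) -> float:
    """Return P(spam | email) using a logistic function over word weights."""
    words = email_text.lower().split()
    score = BIAS + sum(WEIGHTS.get(word, 0.0) for word in words)
    return 1.0 / (1.0 + math.exp(-score))


def classify(email_text: str, threshold: float = 0.5) -> str:
    """Turn the probability into a label, the typical output of a discriminative model."""
    return "spam" if spam_probability(email_text) >= threshold else "not spam"


print(classify("free winner prize"))
print(classify("meeting tomorrow"))
```

The output here is a score and a label, not new content: the model only draws a boundary between the two classes.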

Generative AI uses models that estimate the probability distribution of the data itself, so that new examples can be sampled from it. Large language models (LLMs) typically use a transformer architecture to predict the next token in a sequence based on the context of previous tokens. Repeating this prediction token by token allows the system to assemble sentences that are grammatically correct and contextually relevant. Image generators, such as those using diffusion models, start with random noise and iteratively refine it into a coherent image guided by a text description.
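
The idea of next-token prediction can be illustrated with a bigram model, which is a far simpler stand-in for a transformer: it only counts which token follows which in the training text. The corpus and function names are assumptions made for this sketch, and the generator picks the most frequent continuation, whereas LLMs usually sample from a probability distribution over a much longer context.

```python
from collections import Counter, defaultdict


def train_bigram_model(text: str) -> dict:
    """Count, for each token, which tokens follow it in the training text."""
    tokens = text.lower().split()
    follow_counts = defaultdict(Counter)
    for current, following in zip(tokens, tokens[1:]):
        follow_counts[current][following] += 1
    return follow_counts


def generate(model: dict, start: str, max_tokens: int = 5) -> list:
    """Greedily append the most frequent next token until none is known."""
    output = [start]
    for _ in range(max_tokens):
        candidates = model.get(output[-1])
        if not candidates:
            break  # the last token never appeared with a successor
        next_token, _count = candidates.most_common(1)[0]
        output.append(next_token)
    return output


# Toy corpus, assumed for illustration only.
corpus = "the cat sat on the mat and the cat slept"
model = train_bigram_model(corpus)
print(generate(model, "on", max_tokens=2))  # ['on', 'the', 'cat']
```

Even this toy model produces a sequence from learned statistics rather than assigning a label, which is the defining trait of a generative model.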

Development and data requirements

Both categories of AI typically require large datasets for training, but the kind of data they need differs. Traditional AI often needs labeled data to learn a specific task. For a supervised learning model to recognize a cat, it must be shown thousands of images labeled as "cat."
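
The role of labels in supervised learning can be sketched with a nearest-centroid classifier. The two numeric features (weight and ear length) and the four examples are invented for illustration and stand in for the thousands of labeled images a real system would need.

```python
from statistics import mean

# Hypothetical labeled examples: (weight_kg, ear_length_cm) paired with a human-supplied label.
LABELED_DATA = [
    ((4.0, 6.5), "cat"),
    ((4.5, 7.0), "cat"),
    ((30.0, 12.0), "dog"),
    ((25.0, 11.0), "dog"),
]


def train_centroids(examples):
    """Average the feature vectors of each label into one centroid per label."""
    grouped = {}
    for features, label in examples:
        grouped.setdefault(label, []).append(features)
    return {
        label: tuple(mean(column) for column in zip(*rows))
        for label, rows in grouped.items()
    }


def predict(centroids, features):
    """Return the label whose centroid is closest (squared Euclidean distance)."""
    def distance(label):
        return sum((a - b) ** 2 for a, b in zip(features, centroids[label]))
    return min(centroids, key=distance)


centroids = train_centroids(LABELED_DATA)
print(predict(centroids, (5.0, 6.0)))
```

Without the label attached to each example, `train_centroids` would have nothing to group by, which is why labeled data is the bottleneck for this kind of model.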

Generative AI models are typically trained on massive, unlabeled datasets using self-supervised learning, in which the training targets are derived from the data itself rather than from human annotation. By ingesting large volumes of text and images, much of it collected from the internet, these models learn the statistical relationships between words or pixels. This broad training enables them to perform a wide variety of tasks without being specifically programmed for each one. However, this approach also introduces risks, such as the production of factually incorrect information, or "hallucinations," because the model is trained to produce statistically likely output rather than to verify facts.
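
A small sketch of how self-supervised training targets can be derived from raw text: each token becomes the target for the tokens that precede it, so no human labeling is needed. The function name, the whitespace tokenization, and the `context_size` parameter are assumptions made for this example; production systems use subword tokenizers and much longer contexts.

```python
def make_training_pairs(text: str, context_size: int = 3) -> list:
    """Turn unlabeled text into (context, next_token) pairs without human annotation."""
    tokens = text.split()
    pairs = []
    for i in range(1, len(tokens)):
        # The context is up to `context_size` tokens immediately before position i.
        context = tuple(tokens[max(0, i - context_size):i])
        pairs.append((context, tokens[i]))
    return pairs


for context, target in make_training_pairs("the cat sat on", context_size=2):
    print(context, "->", target)
```

Every pair is correct with respect to the source text, but nothing in this process checks whether the text itself is true, which is one way to see why fluent output and factual accuracy can diverge.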